The European Commission has unveiled a digital age verification app designed to let users prove they are over 18 without sharing personal data. President Ursula von der Leyen has promoted the tool as a free, privacy-first solution for platforms struggling to meet child-safety obligations under the Digital Services Act. Yet within days of its April debut, a video demonstrated how the prototype could be bypassed in under two minutes. The Commission issued a patch and described the flaws as typical of a prototype.

A Long-Awaited but Insufficient Step

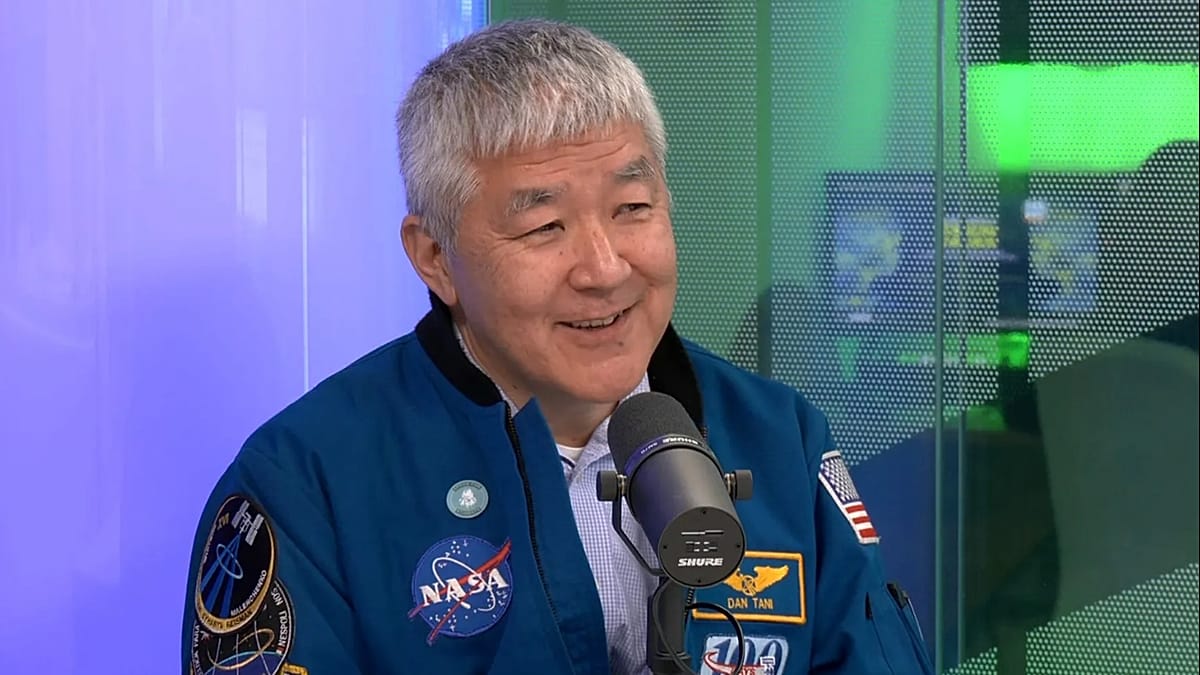

MEP Christel Schaldemose, rapporteur for the European Parliament's call for a harmonised EU-wide minimum age of 16 on social media, welcomed the app as a first step but expressed frustration over the timeline. Von der Leyen announced an expert panel on children's digital safety in September 2025, but by April 2026 it had barely begun work. "From September until April is quite a long time," Schaldemose told Euronews. "I don't know if they're delaying on purpose, but I do think that they are too slow on this."

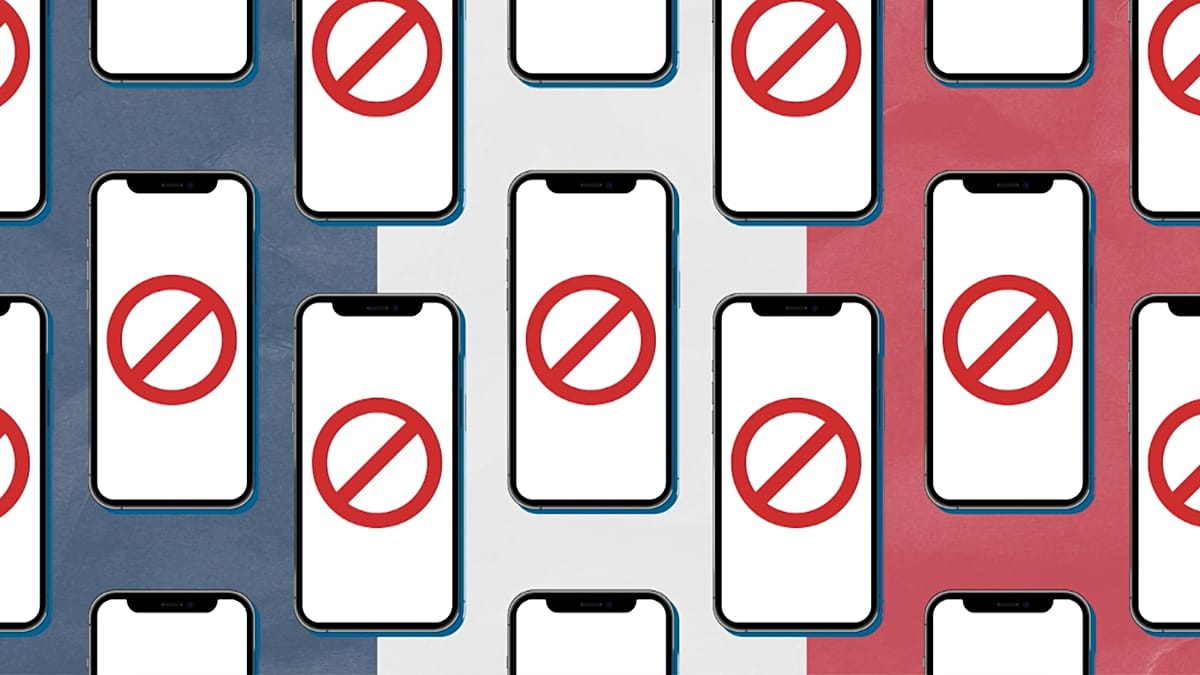

The delay has real consequences. While Brussels prepares its tool, several member states, including France, Spain, Greece, and Ireland, have already enacted their own age restrictions and social media rules, creating the fragmented regulatory landscape the EU was meant to prevent. Schaldemose warned that without harmonised verification tools, young people will use VPNs to bypass national rules, undermining protection across the bloc.

A Quick Technical Fix?

Child rights advocates question whether the app addresses the real sources of harm. Francesca Pisanu, EU Advocacy Officer at Eurochild, welcomed the Commission's infrastructure but cautioned against overselling it. "The app should not be seen as a silver bullet, but as one tool within a much broader child-rights-based approach," she said. "If it is presented as the solution, there is a real risk that it becomes a quick technical fix to a structural problem."

That structural problem, according to Eurochild, lies not in children's access to platforms but in the platforms themselves. Recommender systems, behavioural advertising, engagement optimisation, and addictive design are the mechanisms generating harm. An app that checks a user's age at the door leaves all that intact. Pisanu argues that the public debate focuses too much on restricting access and not enough on redesigning harmful systems.

Privacy Promises Under Scrutiny

The Commission insists the app meets the highest privacy standards. Users verify their age with a passport or ID card, but the platform receives only a yes-or-no confirmation, not personal details. The design uses zero-knowledge proofs and is open source. However, privacy advocates remain unsatisfied. Identity documents must still be scanned, third-party integrations widen potential data exposure, and poorly managed tokens or logs could link users across platforms. The Commission has not directly addressed these concerns.

Schaldemose is less alarmed about privacy than she once was. "Two years ago, we might have had some issues on data protection, but the tools developed today are made in a way where that problem is tackled," she said. Still, she added a sharp caveat: "If you're so afraid of it, then you shouldn't use the platforms, because they have your data no matter who you are." Pisanu draws a hard line, insisting that any age assurance system must be "privacy-preserving, reliable, robust, accurate, proportionate, and backed by wider safeguards."

Platforms Remain the Core Problem

Both Schaldemose and Pisanu argue that Brussels has yet to hold platforms primarily accountable. Schaldemose said of addictive design features: "They know it is harmful and they should stop the practices already today. They earn quite a lot of money, it's visible, you can look at their earnings. So, I think they can afford it." Pisanu made the same point. "The responsibility should lie first and foremost with tech companies, because they design and profit from these environments," she said. "Parents can play a crucial role, but they cannot be expected to carry primary responsibility for managing risks created by powerful commercial systems."

The Commission's app could ease compliance and reduce data exposure. But without binding rules on platform design, algorithmic transparency, and accountability, and without a mandatory, unified framework to replace patchwork national laws, it remains a technical fix, not a complete political strategy. As Schaldemose put it: "We cannot wait any longer for a signal from the Commission about what they expect to do."