A sophisticated disinformation campaign is deploying artificial intelligence to fabricate videos of Ukrainian soldiers, aiming to fracture morale and public trust in Kyiv's military command. According to a new analysis by forensic AI detection firm Sensity AI, pro-Russian actors are creating and disseminating deepfake content designed to mimic authentic frontline footage, with the strategic goal of portraying Ukraine as weak and its leadership as failing.

The Anatomy of a Deepfake Campaign

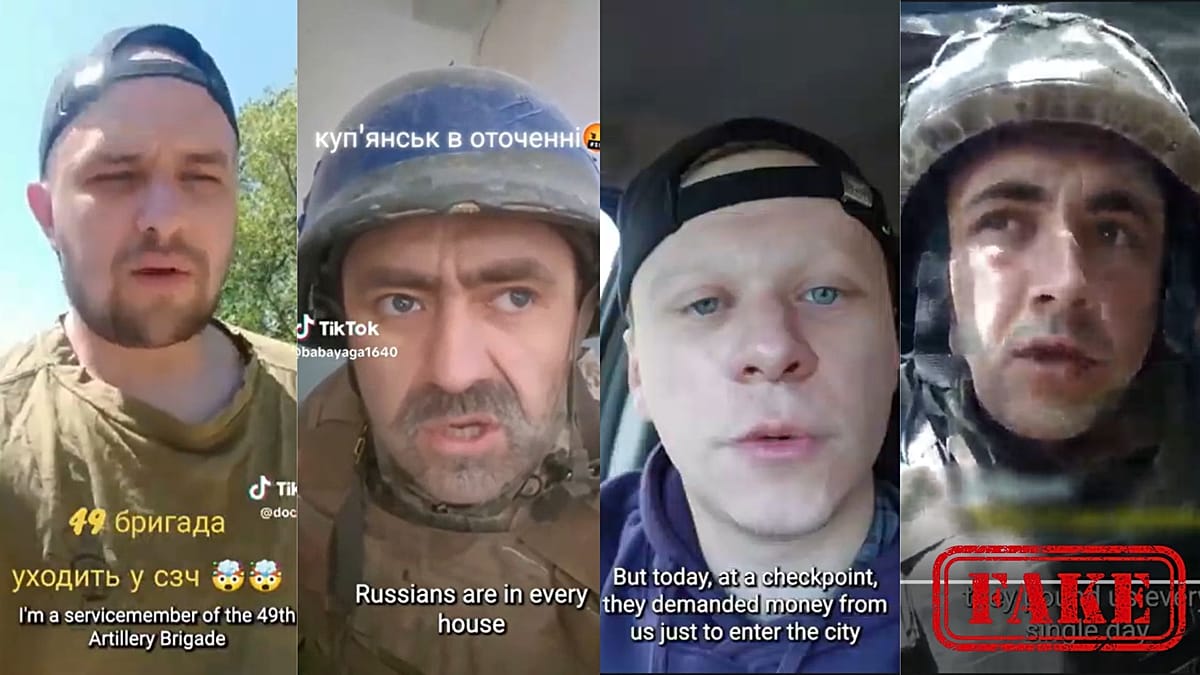

Sensity's researchers identified a core set of 60 videos confirmed as AI-manipulated, part of a broader ecosystem of more than 1,000 similar clips. These forgeries often show soldiers in dire situations, such as a camera panning across trenches purportedly filled with casualties, or soldiers delivering scripted critiques of their own chain of command. In one example, a digitally generated soldier states, "I don't want to serve with them. And I don't need them either." The videos use AI-generated faces and synthetic voices and, in some cases, blend authentic footage with fabricated elements, which makes them harder to detect.

The dissemination follows a deliberate pattern. Content is first uploaded to platforms such as TikTok and Telegram from anonymous or newly created accounts. Once it gains initial engagement, it is reposted to X, Facebook, Instagram, and YouTube, where recommendation algorithms can amplify emotionally charged material. This multi-platform strategy is designed to maximize visibility.

"The real danger of these videos on platforms such as TikTok and Meta is not simply that some users may believe them; it is that they can shape perception at scale, inject confusion into fast-moving events, and gradually erode trust in what people see," said Sensity's founder, Francesco Cavalli.

From Social Media Feeds to Kremlin-Linked Outlets

The campaign's lifecycle extends beyond social media. Sensity reports that once a narrative gains traction online, it is frequently picked up by pro-Russian military bloggers or pro-Kremlin media sites such as the Pravda network. This creates a deceptive feedback loop in which content originating on fringe platforms is laundered through more established (though biased) channels that lend it an air of credibility.

The narratives pushed are consistent: utter despair on the front line, claims that Ukraine's military leaders are incompetent or absent, and allegations of mismanagement. A particularly insidious thread normalizes surrender, with fake soldiers claiming that abandoning their posts is the only logical option.

This digital offensive unfolds against a wider backdrop of information warfare, in which European societies are also grappling with the weaponization of technology. Just as authorities in Marseille recently confronted the use of social media to organize protests, the conflict in Ukraine shows how digital tools can be repurposed to manipulate public sentiment and challenge institutional authority.

The European Union has long identified hybrid threats, which combine cyberattacks, disinformation, and pressure on critical infrastructure, as a primary security challenge. This deepfake campaign against Ukrainian morale is a textbook example of such a threat, using cutting-edge technology to achieve strategic psychological objectives. It underscores the urgent need for robust media literacy and advanced detection capabilities across the continent.

For Ukraine's allies in the EU and across Europe, from Berlin to Warsaw and Helsinki to Lisbon, the campaign is a stark reminder that modern conflict extends far beyond the physical battlefield. Defending democratic discourse requires vigilance not just against traditional propaganda, but against AI-generated fabrications designed to demoralize and divide.