OpenAI has released GPT-5.5, its newest artificial intelligence model, which the company describes as its “smartest and most intuitive model yet.” The model is designed to better interpret user intent and handle complex, multi-step tasks such as writing and debugging code, analyzing data, and generating documents and spreadsheets.

Unlike earlier versions, GPT-5.5 can plan its approach and continue working until a task is completed, reducing the need for users to break down instructions into multiple prompts. OpenAI says this makes the model particularly valuable for coding, routine office work, and early-stage scientific research.

Performance and Availability

In internal benchmarks, GPT-5.5 outperformed its predecessor, GPT-5.4, on coding tests that measure complex software tasks, including command-line operations and real-world GitHub issue resolution. Starting Friday, the model is being rolled out to Plus, Pro, Business, and Enterprise users via ChatGPT and Codex, OpenAI’s coding tool. It will also be accessible through an Application Programming Interface (API), allowing developers and companies to integrate it directly into their applications. OpenAI has not specified exact timelines or regions for API availability.

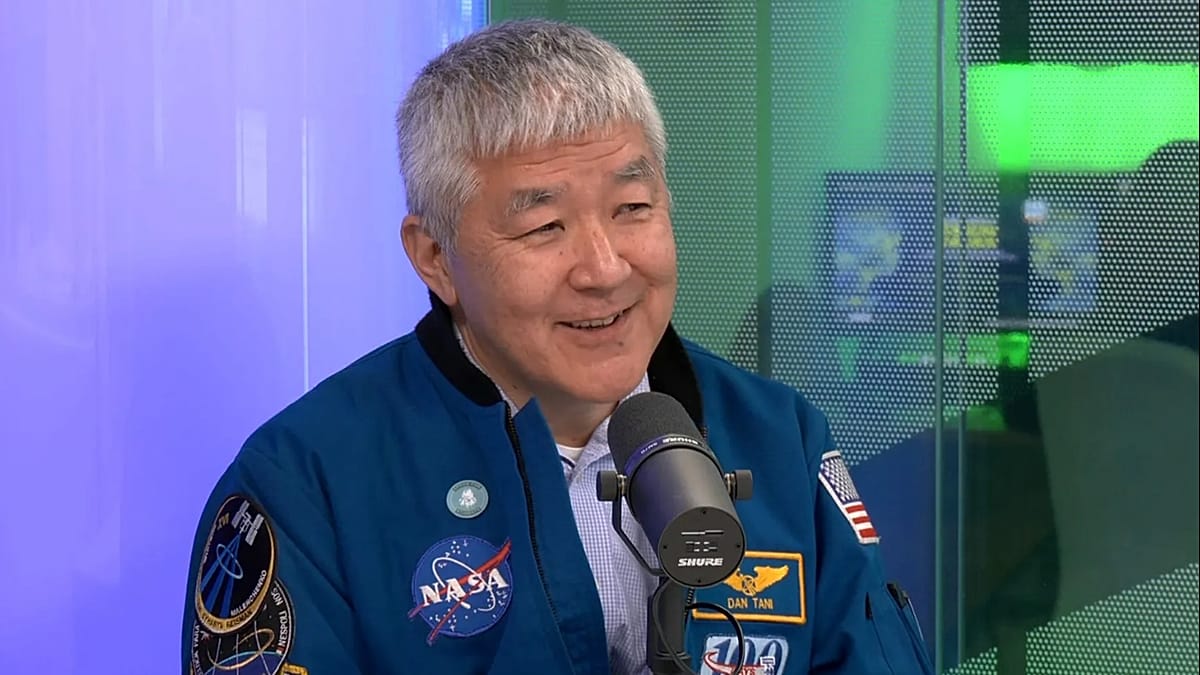

The company says GPT-5.5 includes its “strongest safeguards to date” and was tested by nearly 200 early-access partners, including firms in software, finance, communications, drug discovery, and scientific research.

Broader AI Landscape

The launch comes amid heightened concerns about the safety and control of increasingly powerful AI models, as tech companies race to outpace each other. Earlier this month, OpenAI’s competitor Anthropic unveiled Claude Mythos Preview, a model the company described as too dangerous for full public release. Mythos can identify thousands of previously unknown, high-severity vulnerabilities across major operating systems and web browsers. Shortly after, OpenAI released GPT-5.4 Cyber, a variant of its flagship model with fewer restrictions on cybersecurity-related queries for legitimate defensive purposes.

These developments reflect a broader trend in the AI industry, where companies are balancing innovation with safety. In Europe, regulators are closely watching these advances. The European Union’s AI Act, which entered into force in August 2024, imposes strict requirements on high-risk AI systems, including those used in critical infrastructure, law enforcement, and employment. OpenAI’s latest model, with its enhanced capabilities, will likely face scrutiny under these rules, particularly regarding transparency and accountability.

European tech firms are also part of this competition. For instance, Elon Musk’s xAI explored a partnership with France’s Mistral to challenge OpenAI, a sign of how competitive the field has become. Meanwhile, OpenAI launched GPT-Rosalind, a model tailored for European biotech research, signaling the company’s interest in the continent’s scientific community.

The release of GPT-5.5 also raises questions about data privacy and workplace monitoring. Meta, for example, monitors employee keystrokes and clicks to train AI models, a practice that has drawn criticism from privacy advocates. As AI becomes more integrated into office workflows, European companies will need to navigate these ethical and legal challenges.

In the cybersecurity domain, the breach of Anthropic’s high-risk Mythos AI model underscores the vulnerabilities that even advanced systems face. OpenAI’s focus on safeguards with GPT-5.5 is a response to such risks, but the effectiveness of these measures will be tested in real-world deployments.

Overall, GPT-5.5 represents a significant step forward in AI capabilities, but its impact will depend on how it is adopted and regulated across Europe’s diverse markets. From Berlin’s tech startups to Paris’s research labs, the model could accelerate productivity and innovation, provided it meets the continent’s stringent standards for safety and ethics.